Alec Radford, Luke Metz, Soumith Chintala. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio.

Below we point out three papers that especially influenced this work: the original GAN paper from Goodfellow et al.; the DCGAN framework, from which our code is derived; and the iGAN paper, from our lab, which first explored the idea of using GANs to map user strokes to images. Please see the discussion of related work in our paper.

Recent Related Work

Generative adversarial networks have been vigorously explored in the last two years, and many conditional variants have been proposed.

Colormind adapted our code to predict a complete 5-color palette given a subset of the palette as input. This application stretches the definition of what counts as "image-to-image translation" in an exciting way: if you can visualize your input/output data as images, then image-to-image methods are applicable! (Not that this is necessarily the best choice of representation, just one to think about.)

We investigate conditional adversarial networks as a general-purpose solution to image-to-image translation problems. These networks not only learn the mapping from input image to output image, but also learn a loss function to train this mapping. This makes it possible to apply the same generic approach to problems that traditionally would require very different loss formulations. We demonstrate that this approach is effective at synthesizing photos from label maps, reconstructing objects from edge maps, and colorizing images, among other tasks. In each case we use the same architecture and objective, simply training on different data. As a community, we no longer hand-engineer our mapping functions, and this work suggests we can achieve reasonable results without hand-engineering our loss functions either.
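As a reference sketch, the "learned loss" idea can be written as the conditional GAN objective used in the pix2pix paper. Here x is the input image, y the target image, z a noise input, G the generator, D a discriminator that scores (input, output) pairs, and λ a weight on an added L1 reconstruction term. The discriminator is trained to maximize the adversarial term while the generator minimizes it, so D effectively acts as a loss function that is learned from data.

$$
\begin{aligned}
\mathcal{L}_{cGAN}(G, D) &= \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x,z}\left[\log\left(1 - D(x, G(x, z))\right)\right] \\
\mathcal{L}_{L1}(G) &= \mathbb{E}_{x,y,z}\left[\lVert y - G(x, z) \rVert_1\right] \\
G^{*} &= \arg\min_{G}\max_{D}\ \mathcal{L}_{cGAN}(G, D) + \lambda\,\mathcal{L}_{L1}(G)
\end{aligned}
$$

Because the same objective is reused unchanged, switching tasks (labels to photos, edges to objects, grayscale to color) only requires changing the training pairs (x, y).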
Image-to-Image Translation with Conditional Adversarial Nets

Example results on several image-to-image translation problems.

High recognition accuracy: whether the text is printed or handwritten, it converts pictures into text, with an accuracy rate as high as 99%.

It immediately recognizes the Japanese text in a picture and can translate the recognized text into multiple languages, including English, Japanese, Korean, French, and Spanish.

Fast recognition speed: for a picture with thousands of words, the text can be extracted in less than three seconds.

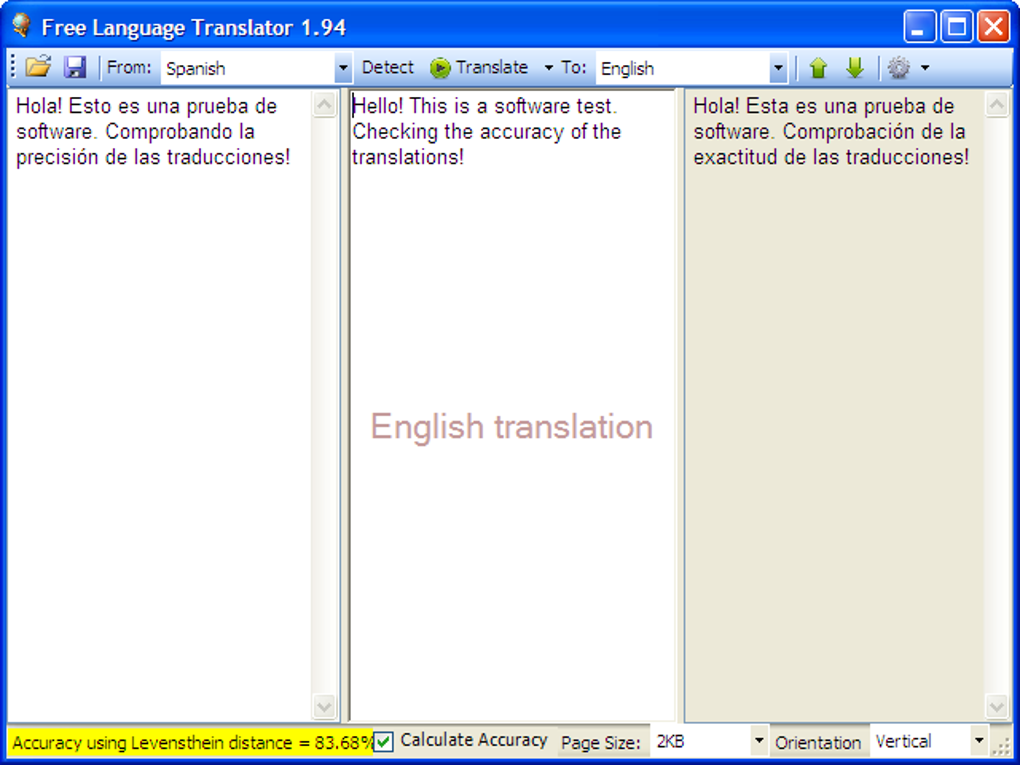

Load an image into image text recognition software, and OCR (optical character recognition) technology recognizes the text it contains. Image recognition technology is becoming increasingly mature. It identifies each character by detecting the light and dark regions that make up the character and comparing the result against a character library, then outputs the best-matching character. Image text recognition is based on this principle of character recognition. If you simply crop the text out of an image, the result is not only unclear but also cannot be edited; a text recognition tool solves this.
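The "detect light and dark, then compare with a character library" step can be illustrated with a minimal template-matching sketch. This is a hypothetical toy, not how any particular OCR product works: the 3x3 glyphs, the `LIBRARY` contents, and the threshold of 128 are all assumptions chosen for illustration, and real OCR engines use far more robust features and models.

```python
from typing import Dict, List

Grid = List[List[int]]  # rows of pixel values

def binarize(glyph: Grid, threshold: int = 128) -> Grid:
    """Map grayscale pixels (0 = dark, 255 = light) to 1 for ink, 0 for background."""
    return [[1 if px < threshold else 0 for px in row] for row in glyph]

def mismatch(a: Grid, b: Grid) -> int:
    """Count the pixels where two same-sized binary glyphs disagree."""
    return sum(pa != pb for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))

def recognize(glyph: Grid, library: Dict[str, Grid]) -> str:
    """Return the library character whose template differs in the fewest pixels."""
    bits = binarize(glyph)
    return min(library, key=lambda ch: mismatch(bits, library[ch]))

# Hypothetical 3x3 character library (1 = dark pixel).
LIBRARY: Dict[str, Grid] = {
    "I": [[0, 1, 0], [0, 1, 0], [0, 1, 0]],
    "L": [[1, 0, 0], [1, 0, 0], [1, 1, 1]],
    "T": [[1, 1, 1], [0, 1, 0], [0, 1, 0]],
}

# A scanned glyph: dark strokes (low values) forming an "L" on a light background.
scanned: Grid = [[30, 200, 220], [25, 210, 240], [20, 40, 35]]

if __name__ == "__main__":
    print(recognize(scanned, LIBRARY))  # prints "L"
```

Picking the template with the smallest pixel mismatch is the simplest possible comparison rule; it breaks down quickly with different fonts, sizes, or noise, which is why practical tools add normalization and learned classifiers on top.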

Is there a tool that can convert an image to text? Faced with piles of photos, extracting the text from each one by hand quickly becomes a headache.
